28. March 2022

It has been four years since the GDPR came into effect. When it appeared, it filled the vacuum of regulation on data protection in the Internet era. Four years later, there is another vacuum of laws regulating the development of AI. Responding to the absence of regulation, the European Commission proposed the AI Act (AIA) in 2021. The AIA is “the first-ever legal framework on AI, which addresses the risks of AI and positions Europe to play a leading role globally” [1].

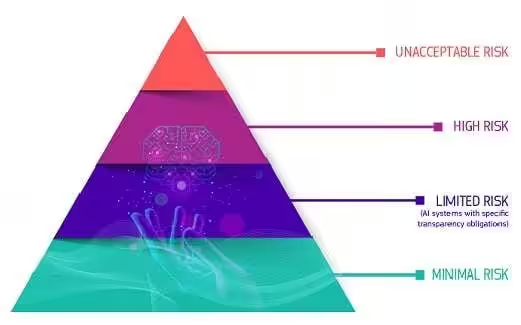

The AI Act categorizes AI use cases into four risk levels: unacceptable, high, limited, and minimal or no risk. It aims to regulate and minimize the harm of the use cases deemed to have “high risk”, and to put a full stop to those categorized as “unacceptable risk”.

AI systems are considered to have unacceptable risk if they are “considered a clear threat to the safety, livelihoods and rights of people” [2], such as social scoring by governments, and toys using voice assistance that encourages dangerous behavior.

High-risk AI software covers a wide range of applications, such as those that put the life and health of citizens at risk (e.g. transport) and safety components of products (e.g. AI applications in robot-assisted surgery). For a complete list, please refer to the European Commission website. In the future, high-risk AI software will need to meet a strict set of criteria before it can be placed on the market.

AI systems such as chatbots are considered to have limited risk: users may not realize they are not talking to a real human unless they are clearly informed beforehand.

Most AI systems currently in use, such as AI-enabled video games or spam filters, are categorized as “minimal or no risk”.

Credit to European Commission
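For readers who think in code, the risk levels described above can be pictured as a simple lookup. This is only an illustrative sketch: the names `RiskTier`, `EXAMPLE_TIERS` and `tier_for` are hypothetical, the entries are just the examples mentioned in this article, and real classification under the AIA depends on the legal text, not on a keyword match.

```python
from enum import Enum
from typing import Optional


class RiskTier(Enum):
    """The four risk levels named in this article."""
    UNACCEPTABLE = "unacceptable risk"
    HIGH = "high risk"
    LIMITED = "limited risk"
    MINIMAL = "minimal or no risk"


# Hypothetical mapping built only from the examples given in the article.
EXAMPLE_TIERS = {
    "social scoring by governments": RiskTier.UNACCEPTABLE,
    "robot-assisted surgery": RiskTier.HIGH,
    "chatbot": RiskTier.LIMITED,
    "spam filter": RiskTier.MINIMAL,
    "ai-enabled video game": RiskTier.MINIMAL,
}


def tier_for(use_case: str) -> Optional[RiskTier]:
    """Return the tier for a known example, or None if it is not listed."""
    return EXAMPLE_TIERS.get(use_case.strip().lower())


print(tier_for("Chatbot"))        # a listed example
print(tier_for("weather model"))  # not listed, so no tier is returned
```

An unlisted use case deliberately returns `None` rather than a default tier, since guessing a tier would be misleading.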

Benefits

The AI Act is an unprecedented regulatory proposal tackling the rapid development of AI, and the absence of laws to regulate it. Through the AI Act, the EU seeks to set a global standard of AI, respecting “laws on fundamental human rights and EU values”. AI use cases that are unlawful and may cause harm will be strictly regulated, or even banned, thus guaranteeing citizens’ fundamental rights.

It also gives AI developers, deployers and users clear rules and criteria to follow when it comes to AI development and application, in pursuit of “reducing administrative and financial burdens for business, in particular small and medium-sized enterprises (SMEs).” [3] Together with follow-up plans, the AI Act will accelerate AI development and innovation in the EU.

Concerns

However, many voices question the proposal. Some describe it as the “most restrictive regulation of artificial intelligence (AI) tools”[4], and doubt how realistic it is to expect the AIA to bring actual benefit to the European economy.

Being AIA-compliant will require a large financial outlay from companies. According to the EU’s impact assessment, if a small business has one high-risk AI product, it might need a compliance investment of €400,000 to set up a quality management system (QMS) [5]. Companies that do not comply with the law will face fines of up to €30 million or 6% of global annual revenue, whichever is higher.
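To make the fine ceiling concrete, here is a minimal sketch of the arithmetic. It assumes the rule in the 2021 proposal that the applicable maximum is the higher of the two figures; the function name `max_fine_eur` and the sample revenues are hypothetical, and an actual fine would be set by regulators case by case, possibly well below this ceiling.

```python
FIXED_CAP_EUR = 30_000_000   # fixed ceiling of EUR 30 million
REVENUE_SHARE_PERCENT = 6    # 6% of global annual revenue


def max_fine_eur(global_annual_revenue_eur: int) -> int:
    """Upper bound of the fine in whole euros.

    Assumption: the higher of the fixed cap and the revenue share applies.
    Integer arithmetic avoids floating-point rounding on large amounts.
    """
    revenue_based = global_annual_revenue_eur * REVENUE_SHARE_PERCENT // 100
    return max(FIXED_CAP_EUR, revenue_based)


# Hypothetical companies with different global annual revenues.
for revenue in (100_000_000, 500_000_000, 1_000_000_000):
    print(f"revenue {revenue:>13,} EUR -> ceiling {max_fine_eur(revenue):>11,} EUR")
```

Under this assumption the two figures meet at €500 million in revenue: below that, the €30 million cap is the binding number, and above it the 6% share is.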

Some also worry the AI Act will further hinder European businesses’ competitiveness in the global market. Europe already falls behind the US and China in AI development and adoption. The Center for Data Innovation claims that “by 2025, the AIA will have cost the European economy more than €30 billion.” [6] The strict regulations may also reduce investment in AI in Europe, and encourage entrepreneurs and startups to set up their businesses in other regions, where they can fully explore and experiment with their ideas without paying compliance costs.

The AIA sends a clear message of the EU’s determination to regulate the AI market in the years to come. Inevitably, some doubt what impact it will have on the EU economy. At the same time, others see the AIA as an opportunity to regulate the EU AI market and facilitate its speedy growth. At the current stage, while the AIA has not yet come into effect, businesses are advised to plan ahead to minimize compliance costs and avoid non-compliance.

brighter AI’s anonymization solution is the world’s most advanced automatic redaction software for images and videos. Our mission is to protect every identity in public. Being GDPR-compliant, we anonymize the content of the data so that individuals cannot be recognized and no data can be traced back to an individual. If you’d like to learn more about our anonymization solution, check out the case studies below, or contact us here.

[1] European Commission; “Shaping Europe’s digital future”; 2021-02-28

[2] European Commission; “Shaping Europe’s digital future”; 2021-02-28

[3] European Commission; “Shaping Europe’s digital future”; 2021-02-28

[4] Benjamin Mueller; Center for Data Innovation; “How Much Will the Artificial Intelligence Act Cost Europe”; 2021-07

[5] European Union; “Study to Support an Impact Assessment of Regulatory Requirements for Artificial Intelligence in Europe”; 2021-04-21

[6] Benjamin Mueller; Center for Data Innovation; “How Much Will the Artificial Intelligence Act Cost Europe”; 2021-07